Ants in my (chan)s

Introduction

So, in February 2020, something interesting happened to me! I submitted a proposal for a talk at Clojure/North. It was the first time I had ever done this, and I didn't really expect to get selected. But I did! Preparing for the talk, learning so many new things along the way, and participating in the conference, which concluded just a few weeks back, was a wonderful experience for me. It has definitely spurred me on in my Clojure journey.

This blog, then, is a two-part series covering what I talked about in my talk, plus some extra material I couldn't get to due to time constraints. If a video or audio format works better for you, you may want to check out the talk first.

Why this topic?

I have always been fascinated and tormented in equal parts by concurrency in programming. Fascinated, because concurrency is at the heart of how computer programs emulate the physical world around us. Wherever you go, you see a significant number of threads vying for and using resources from a shared pool. My colleague at Helpshift has summarised this beautifully in a blog post on how a typical government office can be modelled as a multi-threaded system.

Tormented, because, well, concurrent programming is hard! It's unreliable, non-deterministic and often an exercise in over-engineering. Even as part of the mobile development team at Helpshift, I have always focussed more on the information-processing side of the technology: fetching data over unreliable networks, processing and storing it, and notifying the UI layer when that information is available. All the while, the aim is to keep the holy UI run loop as free as possible to draw those sweet 60 fps animations!

When I started doing server-side programming with Clojure, I was pulled back towards my old fascination.

Topics covered

In part 1, we'll start with a brief whirlwind tour of concurrency support in iOS programming. We'll look at the tools ObjC and Swift provide for writing multi-threaded code and at the patterns the iOS frameworks encourage. Then we'll cover the concurrency primitives and support that Clojure offers.

In part 2, I'll share what I think are important lessons that can help any Swift developer write better concurrent code. Then we'll walk through the code I have written to implement the Ants colony simulation problem in Swift.

Concurrency in mobile programming

or, get out of my run-loop

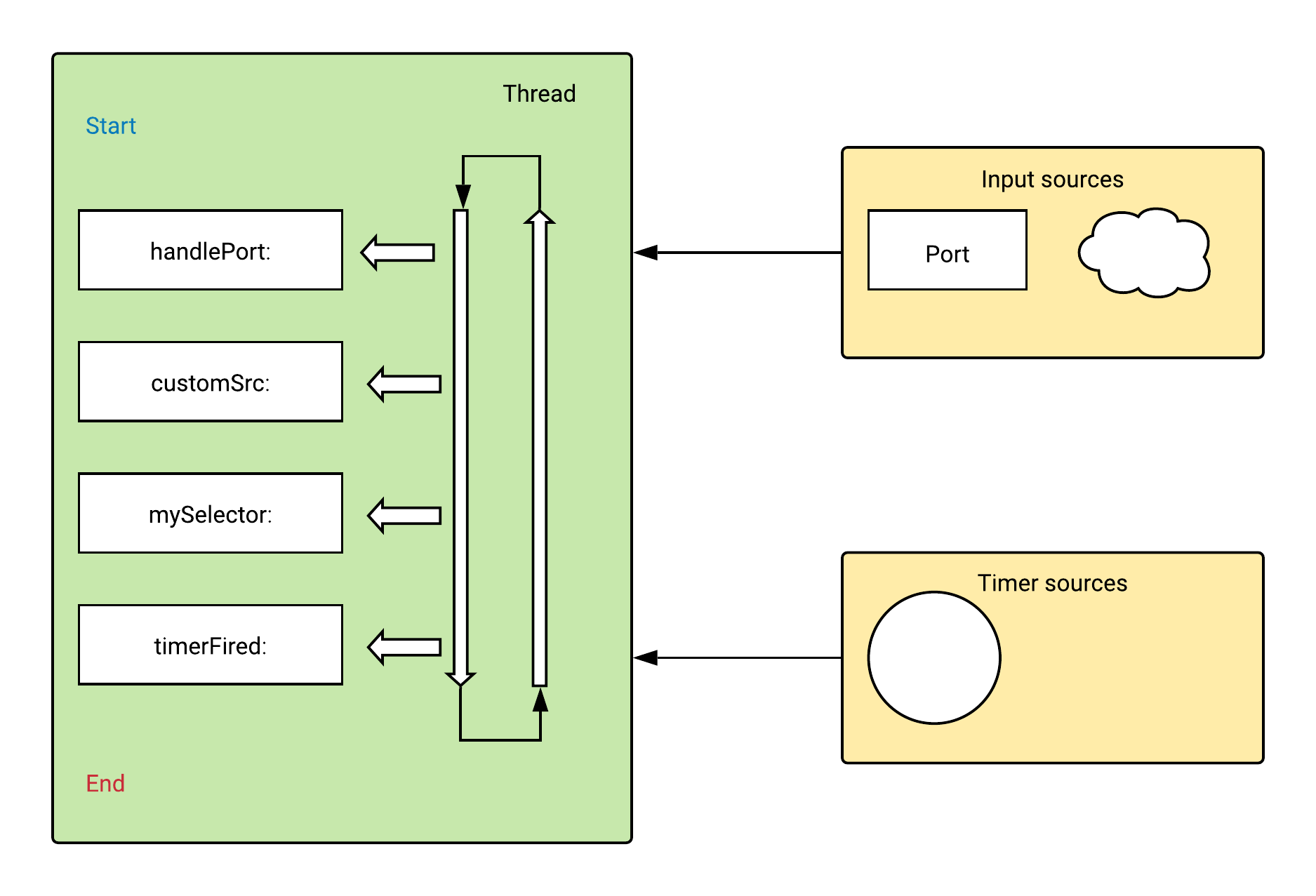

Run loops

In mobile app development, the world of concurrent programming revolves around something known as run loops. A run loop is a piece of infrastructure used to manage events arriving asynchronously on a thread. It works by monitoring one or more event sources for the thread. As events arrive, the system wakes up the thread and dispatches the events to the run loop, which then dispatches them to the handlers you specify. If no events are present and ready to be handled, the run loop puts the thread to sleep. The main thread's run loop also drives UI event handling and drawing, so it is supposed to be kept as far away from business logic as possible.
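To make the event-source idea concrete, here is a small sketch using Foundation's RunLoop and Timer. The repeating timer plays the role of an event source; the 0.05 s interval and the three-tick limit are arbitrary choices for illustration. In a real app the main run loop is already running, so you would not drive it by hand like this.

```swift
import Foundation

var ticks = 0

// A repeating timer is one kind of event source attached to the current thread's run loop.
_ = Timer.scheduledTimer(withTimeInterval: 0.05, repeats: true) { timer in
    ticks += 1
    print("tick \(ticks)")
    if ticks == 3 { timer.invalidate() }   // remove the source after three events
}

// Run the loop: the thread sleeps until the timer fires, the handler above runs,
// and the thread goes back to sleep. run(mode:before:) returns false once there
// are no sources left to service.
while ticks < 3 && RunLoop.current.run(mode: .default, before: Date(timeIntervalSinceNow: 1)) {}
```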

ObjC support

When I started out, and until painfully recently, the language of choice was Objective-C. The concurrency constructs in ObjC are fairly basic, at best! In ObjC, properties are readwrite by default, so any code holding a reference to an object can change its state.

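```objc
#import <Foundation/Foundation.h>

@interface Person : NSObject
@property NSString *name;              // atomic by default
@property (nonatomic) NSInteger age;
- (instancetype)initWithName:(NSString *)name andAge:(NSInteger)age;
@end

@implementation Person
- (instancetype)initWithName:(NSString *)name andAge:(NSInteger)age {
    if ((self = [super init])) {
        self.name = name;
        self.age = age;
    }
    return self;
}
@end

int main(void) {
    @autoreleasepool {
        Person *sarah = [[Person alloc] initWithName:@"Sarah" andAge:34];
        // Any code holding a reference can change the object's state
        sarah.name = @"This is a new name";
    }
    return 0;
}
```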

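Properties are atomic by default unless declared nonatomic (as age is above). An atomic property behaves essentially like a getter and setter wrapped in an exclusive lock, conceptually like the hand-written accessors below. Here @synchronized does exactly what you would expect: only one thread at a time can execute a block that synchronizes on the same object, in this case self.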

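```objc
// Conceptually what atomic property accessors look like, written by hand
// under manual reference counting (MRC). The runtime's real implementation
// differs in detail, but the effect is an exclusive lock around get and set.
- (NSString *)userName {
    NSString *retval = nil;
    @synchronized(self) {
        retval = [[userName retain] autorelease];
    }
    return retval;
}

- (void)setUserName:(NSString *)userName_ {
    @synchronized(self) {
        [userName_ retain];   // retain the new value first...
        [userName release];   // ...then release the old one
        userName = userName_;
    }
}
```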
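Swift has no atomic property attribute, so the same idea is usually written by hand. Here is a minimal sketch, assuming a plain NSLock is good enough for your contention level; SafeUser is a hypothetical type, not part of any framework.

```swift
import Foundation

// Hypothetical type: the getter and setter take the same exclusive lock,
// which is conceptually what an ObjC atomic property does.
final class SafeUser {
    private let lock = NSLock()
    private var _userName: String = ""

    var userName: String {
        get {
            lock.lock(); defer { lock.unlock() }
            return _userName
        }
        set {
            lock.lock(); defer { lock.unlock() }
            _userName = newValue
        }
    }
}

let user = SafeUser()
// Many threads writing at once: each individual get or set is atomic.
DispatchQueue.concurrentPerform(iterations: 100) { i in
    user.userName = "user-\(i)"
}
print(user.userName)
```

Atomic accessors only make each individual read or write safe. A read-modify-write such as user.userName += "!" is still a locked get followed by a separate locked set, so two threads doing it at once can lose an update.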

There are some ways to write saner code using the immutable and mutable variants of basic data types. For example, consider the code below, which appends an element to an array. Both the input and the return value are immutable arrays. Since we can't add anything to an NSArray, we create a mutable copy, add the element to it, and return an immutable copy as an NSArray again. Unfortunately, because every such copy costs an extra allocation, idiomatic ObjC code seldom looks like this.

```objc
+ (NSArray *)append:(NSString *)element
               onto:(NSArray *)oldArray {
    // This fails to compile, because mutation
    // methods don't exist on NSArray:
    // [oldArray addObject:element];

    // So this is how we deal with it!
    NSMutableArray *newArray = [oldArray mutableCopy];
    [newArray addObject:element];

    // If you really care about immutability, hand back an immutable copy
    return [newArray copy];
}
```

Swift support

let and vars

Swift, being a more modern language, is much better at helping you write safe code. Immutability is supported first-class via let declarations. As you can see in the code here, a let constant of a value type, such as a String, can neither be modified nor reassigned. Function arguments, loop variables and values bound with if let are all constants, i.e. immutable by default.

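```swift
import Foundation

final class Person {
    var name: String
    var age: Int
    init(name: String, age: Int) { self.name = name; self.age = age }
}

public func lookAtImmutables(input: String, someOptionalString: String?) {
    let aString = "Initial value"
    // Not allowed: a let String cannot be mutated
    // aString.append("a suffix")
    // This doesn't work either: a let cannot be reassigned
    // aString = "another string"

    // But this does, since Person is a class and its members are vars
    let aPerson = Person(name: "Rhi", age: 34)
    aPerson.name = "New name"

    // Function inputs are constants and cannot be modified
    // input.append("a suffix")

    // Values bound with if let are constants too, so build a new value instead
    if let str = someOptionalString {
        let replaced = str.replacingOccurrences(of: "::", with: "->>")
        print(replaced)
    }
}
```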

Reference and Value types

Swift also makes a clear distinction between reference types (classes) and value types (structs and enums). A method on a class can change the object's properties freely, but a method on a value type that changes self has to be marked explicitly with the mutating keyword. Reference types only pass around references to the object, so any change made through one reference is visible through every other reference to that object. Structs, meanwhile, are treated as values and copied on assignment. But beware: Swift does not stop you from shooting yourself in the foot. If a member of a struct is declared as a var, it can still be changed in place through any var instance of that struct.

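```swift
final class Person {                       // reference type
    var name: String
    var age: Int
    init(name: String, age: Int) { self.name = name; self.age = age }
}

struct StubbornPerson {                    // value type with var members
    var name: String
    var age: Int
    mutating func changeName(name: String) { self.name = name }
}

public func lookAtReferenceAndValue() {
    // Class: both constants point at the same object
    let aPerson = Person(name: "Rhi", age: 34)
    let another = aPerson
    aPerson.name = "New name"
    print("Another is \(another.name)")                    // Another is New name

    // Struct: assignment makes an independent copy
    var aStubbornPerson = StubbornPerson(name: "Aruna", age: 32)
    let anotherPerson = aStubbornPerson
    aStubbornPerson.age = 33
    print("Age of another person : \(anotherPerson.age)")  // still 32

    // A let instance of a struct is fully immutable, even its var members:
    let anotherStubbornPerson = StubbornPerson(name: "Aruna", age: 32)
    // anotherStubbornPerson.changeName(name: "Sarah")  // error
    // anotherStubbornPerson.age = 33                   // error
    // anotherStubbornPerson = aStubbornPerson          // error
}
```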

Library support

Grand Central Dispatch

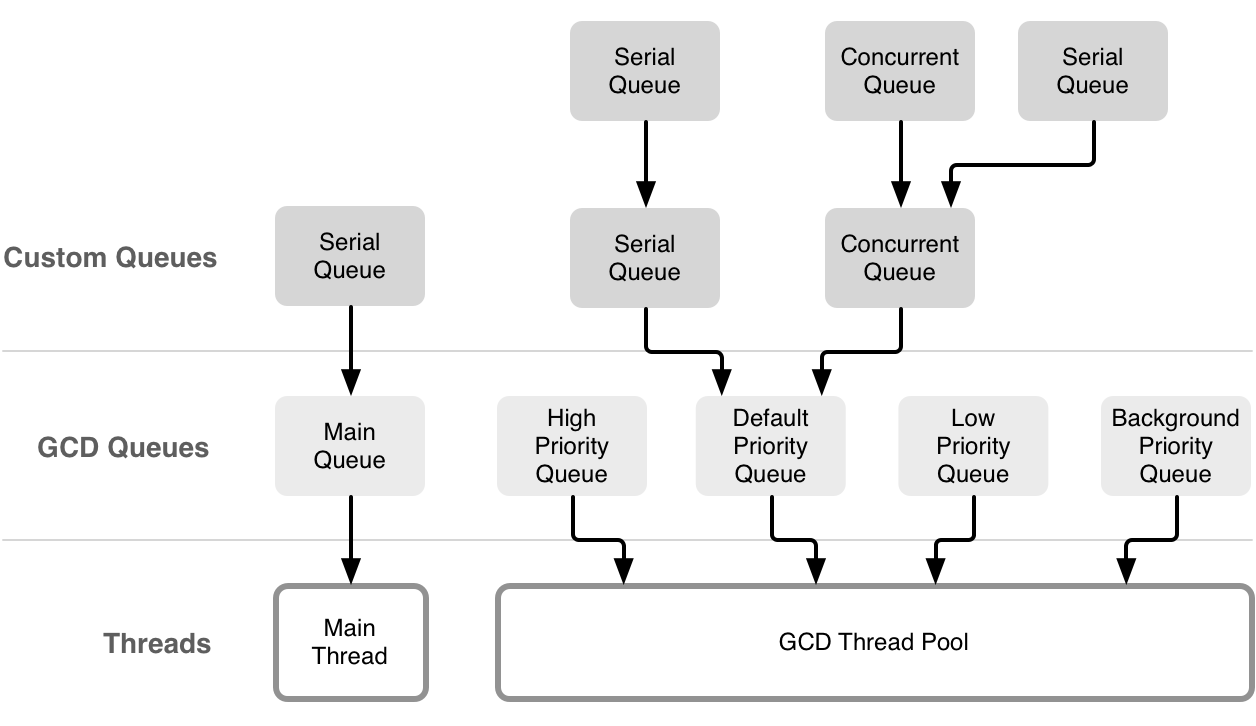

Concurrency support in iOS development comes via two main frameworks: Grand Central Dispatch and the Operation queue APIs built on top of it. Grand Central Dispatch (GCD) contains language features and runtime libraries that provide comprehensive improvements to the support for concurrent code execution. With raw threads, the burden of creating a scalable solution rests squarely on the shoulders of you, the developer. You have to decide how many threads to create and adjust that number dynamically as system conditions change.

GCD takes the thread management code you would normally write in your own applications and moves that code down to the system level. All you have to do is define the tasks you want to execute and add them to an appropriate dispatch queue. GCD takes care of creating the needed threads and of scheduling your tasks to run on those threads. Because the thread management is now part of the system, GCD provides a holistic approach to task management and execution, providing better efficiency than traditional threads.

Dispatch queues are FIFO queues to which your application can submit tasks in the form of a block of code. Dispatch queues execute tasks either serially or concurrently. Work submitted to dispatch queues executes on a pool of threads managed by the system. Except for the dispatch queue representing your app’s main thread, the system makes no guarantees about which thread it uses to execute a task. You schedule work items synchronously or asynchronously. When you schedule a work item synchronously, your code waits until that item finishes execution. When you schedule a work item asynchronously, your code continues executing while the work item runs elsewhere.

The main queue and a set of global concurrent queues are also provided to the programmer. The global concurrent queues come in different quality-of-service (QoS) classes, which act as priorities: .userInteractive, .userInitiated, .default, .utility, .background and .unspecified.
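The queue behaviour described above can be sketched as follows. The queue label is an arbitrary placeholder, and the semaphore is only there so this command-line sketch waits for the background work before exiting.

```swift
import Foundation

// A serial queue runs one work item at a time, in FIFO order.
let serial = DispatchQueue(label: "com.example.serial")
var events: [String] = []

serial.async { events.append("async 1") }   // returns immediately; runs later on the queue
serial.async { events.append("async 2") }

// sync blocks the caller until this item, and everything queued before it, has run.
serial.sync { events.append("sync 3") }
print(events)   // ["async 1", "async 2", "sync 3"]

// A global concurrent queue, picked by quality-of-service class.
let utilityDone = DispatchSemaphore(value: 0)
DispatchQueue.global(qos: .utility).async {
    print("running on a global .utility queue")
    utilityDone.signal()
}
utilityDone.wait()
```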

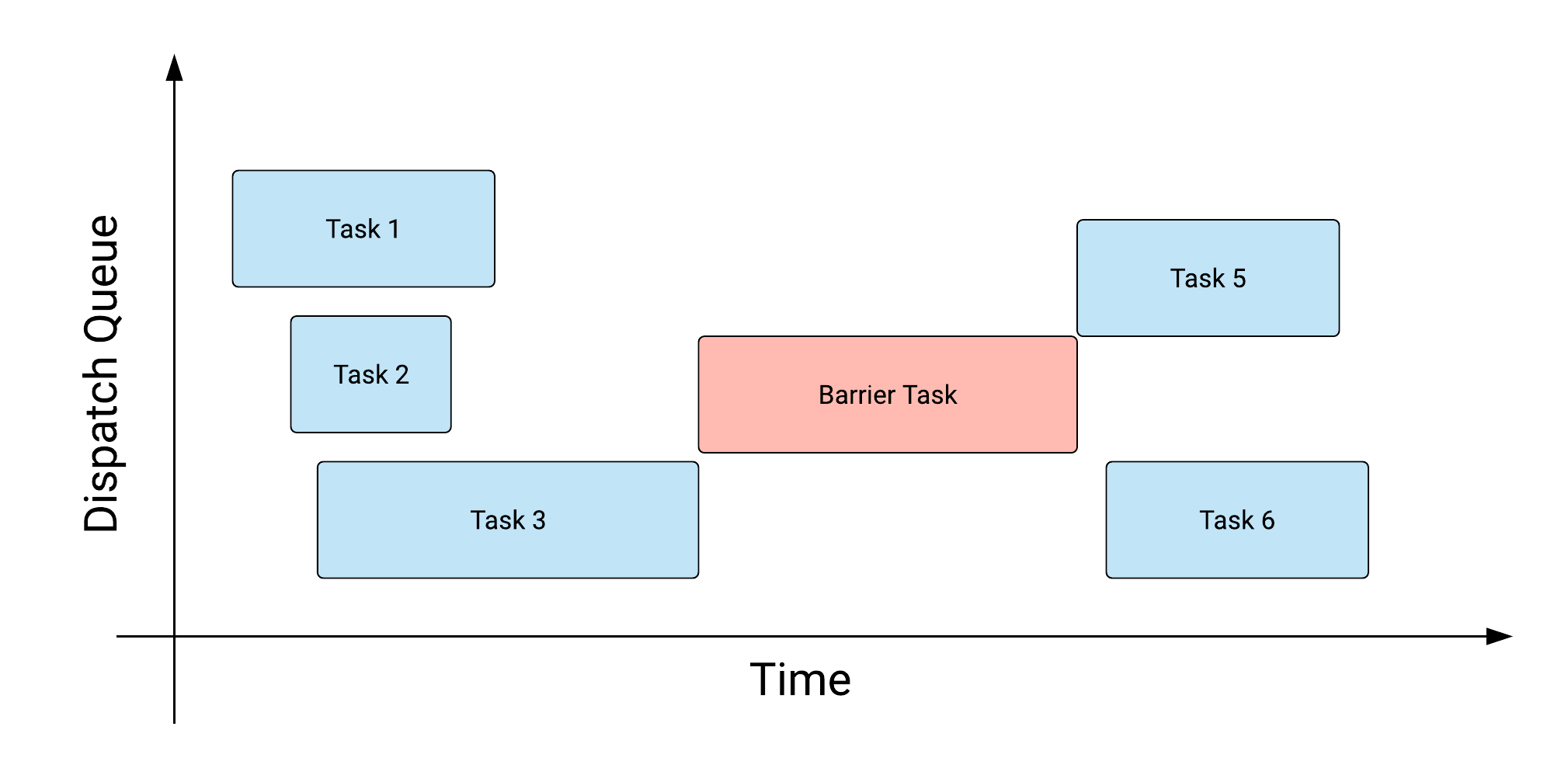

Dispatch Barriers

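Let's say you have added a series of closures to a specific queue, but you want to execute a job only after all the previously submitted asynchronous tasks have completed. You can use a dispatch barrier to do that. Barriers are a way to impose ordering on concurrent queues, which normally don't execute their tasks in a repeatable order: a barrier task waits until every task submitted before it has finished, runs on its own, and only then lets later tasks start. Dispatch barriers only work on concurrent queues that you create yourself; on serial queues and on the global concurrent queues they behave like ordinary tasks.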
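A common use of barriers is the reader-writer pattern: reads run concurrently through sync, while writes go through async(flags: .barrier). Here is a minimal sketch; ThreadSafeCache and its queue label are hypothetical names.

```swift
import Foundation

// Hypothetical reader-writer cache: concurrent reads, exclusive writes.
final class ThreadSafeCache {
    // Barriers only work on a concurrent queue you create yourself.
    private let queue = DispatchQueue(label: "com.example.cache", attributes: .concurrent)
    private var storage: [String: Int] = [:]

    func value(for key: String) -> Int? {
        queue.sync { storage[key] }              // many readers may run at once
    }

    func set(_ value: Int, for key: String) {
        queue.async(flags: .barrier) {           // waits for earlier work, then runs alone
            self.storage[key] = value
        }
    }
}

let cache = ThreadSafeCache()
DispatchQueue.concurrentPerform(iterations: 100) { i in
    cache.set(i, for: "key-\(i)")
}
// A read submitted after the barriers only runs once they have all finished.
print(cache.value(for: "key-42") ?? -1)   // 42
```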

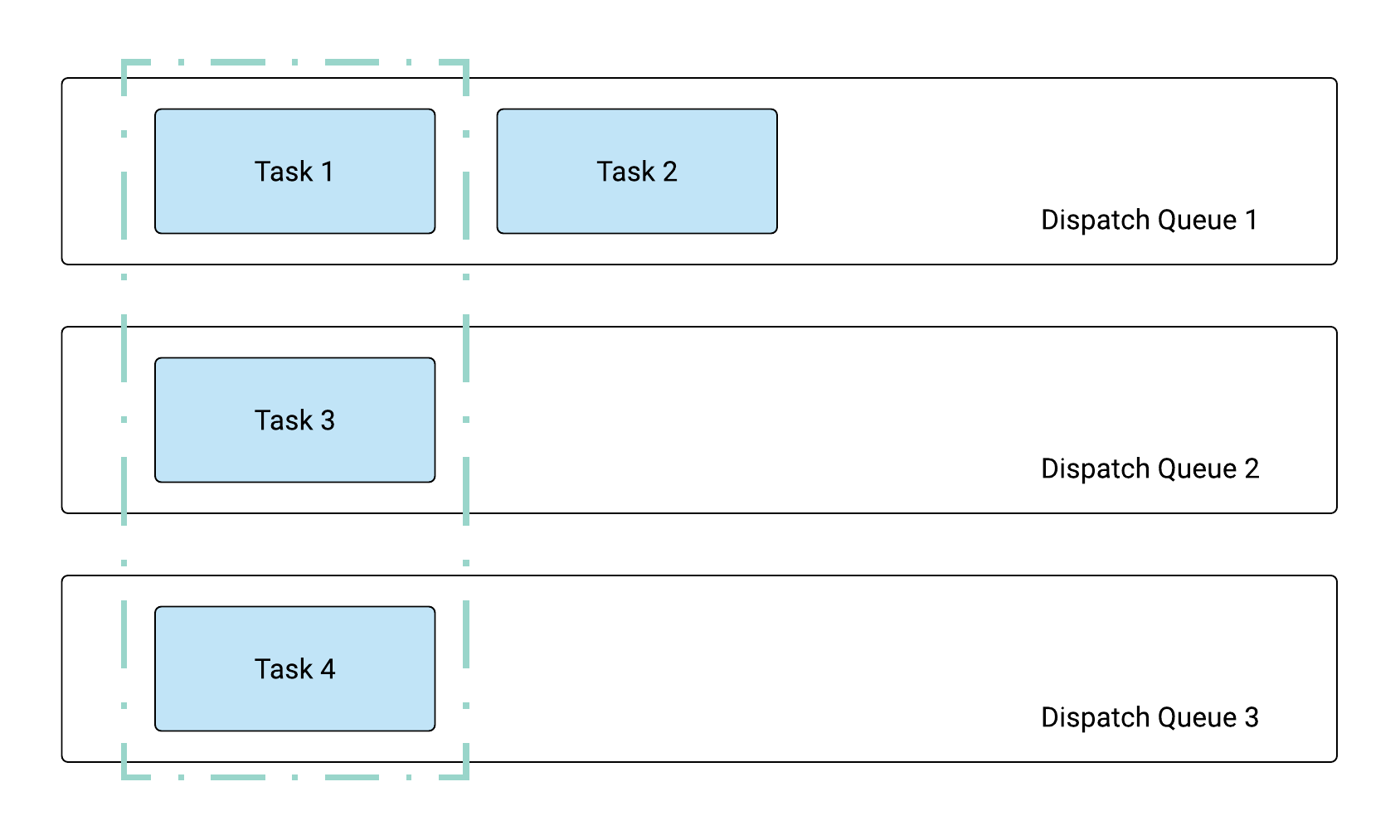

Dispatch Groups

If you have multiple tasks, which may be added to different queues, and you want to wait for all of them to complete, you can group them in a dispatch group. Let's say you start downloads for a bunch of URLs on different concurrent queues. Since they are all part of one logical operation, you want to be able to wait for all of them to finish. You add them to the same dispatch group and then get notified when all of them are done.
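The download scenario can be sketched with a DispatchGroup. The "downloads" here are hypothetical stand-ins that just sleep briefly, and group.wait() is used only because this is a command-line sketch; in an app you would typically use group.notify(queue: .main) instead of blocking.

```swift
import Foundation

let group = DispatchGroup()
let resultsQueue = DispatchQueue(label: "com.example.results")   // serializes writes
var finished: [Int] = []

for id in 0..<5 {
    // Tasks may go to different queues; the group tracks them all.
    let queue = DispatchQueue.global(qos: id.isMultiple(of: 2) ? .utility : .userInitiated)
    queue.async(group: group) {
        Thread.sleep(forTimeInterval: 0.05)          // stand-in for a download
        resultsQueue.sync { finished.append(id) }
    }
}

group.wait()   // returns once every task in the group has finished
print("all \(finished.count) tasks done")   // all 5 tasks done
```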

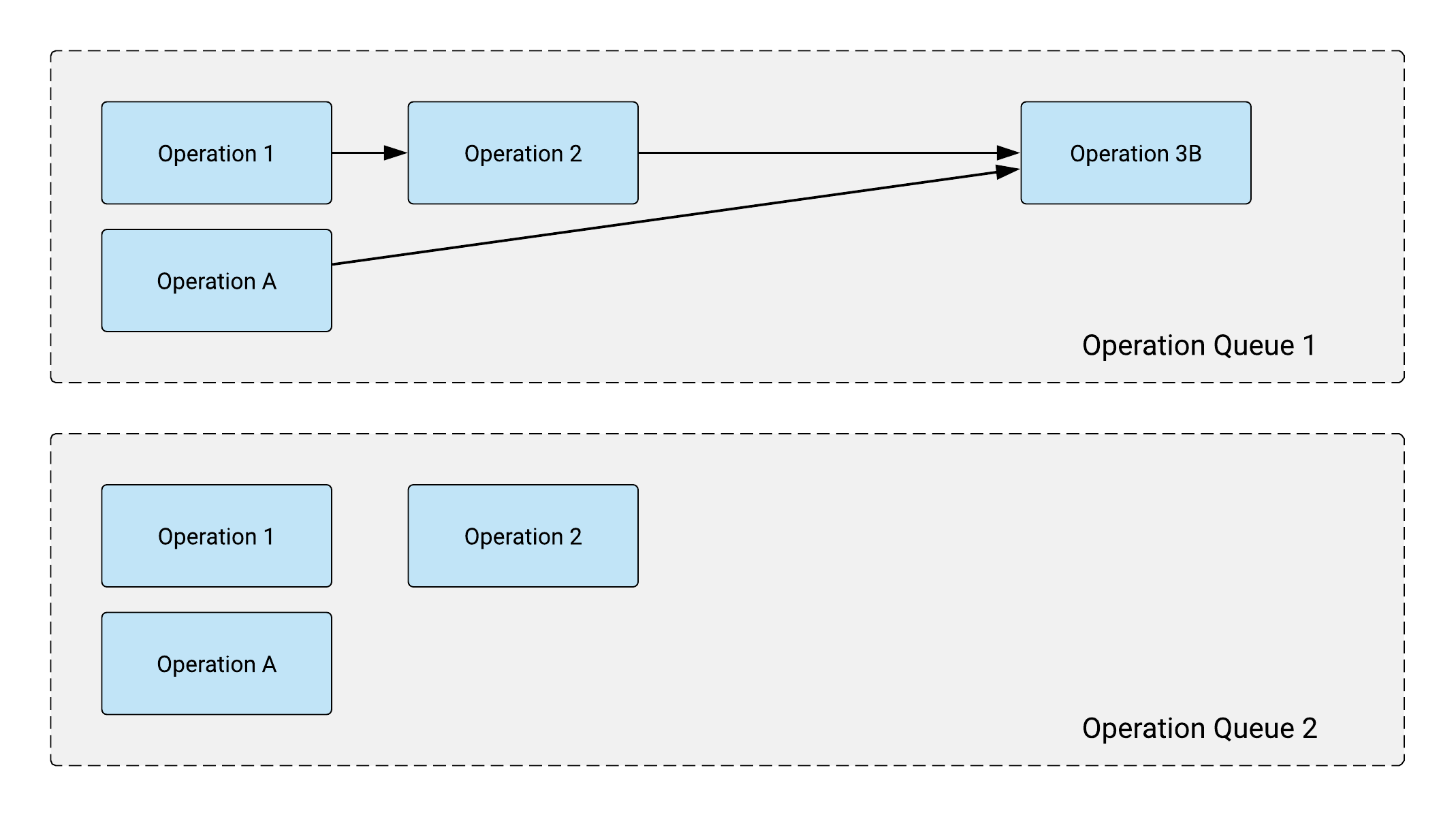

Operation Queues

Operations are a high-level abstraction built on top of GCD. An operation object is an instance of the NSOperation class (Operation in Swift) that you use to encapsulate work you want your application to perform. Operations support graph-based dependencies between operation objects. These dependencies prevent a given operation from running until all of the operations on which it depends have finished running. Dependencies are also not limited to operations in the same queue: operation objects manage their own dependencies, so it is perfectly acceptable to create dependencies between operations and add them all to different queues. One thing that is not acceptable, however, is creating circular dependencies between operations. Doing so is a programmer error that will prevent the affected operations from ever running. Operations also support priorities, which affect their relative execution order, and cancellation semantics: calling cancel() marks an operation as cancelled, and an operation that is already executing is expected to check isCancelled and stop early.
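Cross-queue dependencies can be sketched with BlockOperation. The download/parse/save pipeline and the two queues are hypothetical; each step just records its name so the execution order is visible.

```swift
import Foundation

var steps: [String] = []
let stepsLock = NSLock()
func record(_ step: String) {
    stepsLock.lock(); steps.append(step); stepsLock.unlock()
}

let download = BlockOperation { record("download") }
let parse = BlockOperation { record("parse") }
let save = BlockOperation { record("save") }

// save waits for parse, which waits for download, even across queues.
parse.addDependency(download)
save.addDependency(parse)

let networkQueue = OperationQueue()
let processingQueue = OperationQueue()
processingQueue.addOperations([parse, save], waitUntilFinished: false)
networkQueue.addOperations([download], waitUntilFinished: true)
processingQueue.waitUntilAllOperationsAreFinished()

print(steps)   // ["download", "parse", "save"]
```

Although parse and save were queued first, neither can start until its dependency finishes, so the order is fixed by the dependency graph rather than by submission order.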

Your application is responsible for creating and maintaining any operation queues it intends to use. An application can have any number of queues, but there are practical limits to how many operations can be executing at a given point in time. Operation queues work with the system to restrict the number of concurrent operations to a value that is appropriate for the available cores and system load. Therefore, creating additional queues does not mean that you can execute additional operations. Dispatch queues themselves are thread-safe. In other words, you can submit tasks to a dispatch queue from any thread on the system without first taking a lock or synchronizing access to the queue.

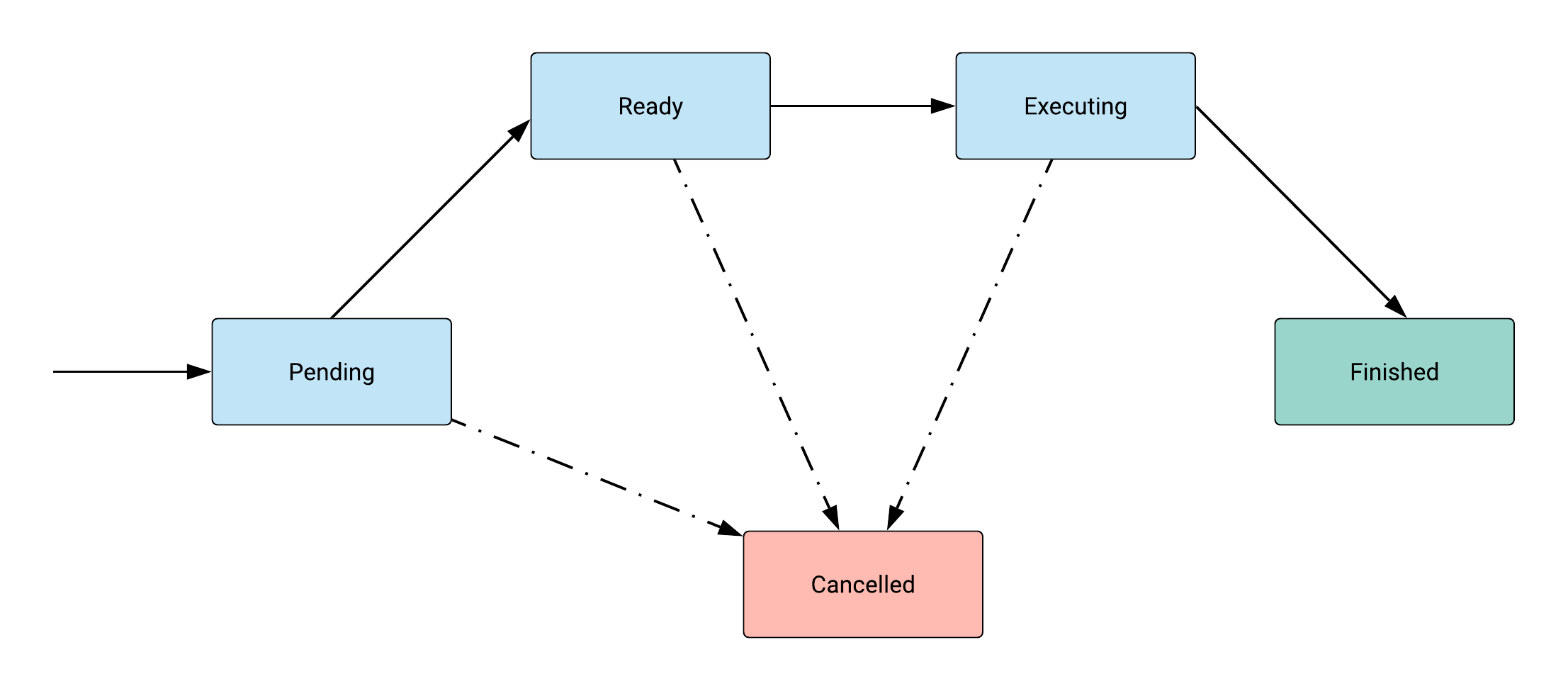

Operation state transitions


Concurrency in Clojure

Immutable data structures are the building blocks of Clojure's great concurrency support. Once you get hold of a map or a vector in a function, you can be sure that nobody can mutate anything about that data! You can only create new versions of it by transforming it with functions like assoc and conj, while the original data remains as it is. Because these persistent data structures share structure between versions, such updates are cheap, and these highly performant implementations make Clojure well suited to server-side workloads.

Futures : A Clojure future evaluates a body of code in another thread. future returns immediately, allowing the current thread of execution (such as your REPL) to carry on. The result of evaluation will be retained by the future, which you can obtain by dereferencing it. Clojure futures are evaluated within a thread pool that is shared with Agents. This pooling of resources can make futures more efficient than creating native threads as needed.

Delays : A delay is a construct that suspends some body of code, evaluating it only upon demand, when it is dereferenced. Delays only evaluate their body of code once, caching the return value. Thus, subsequent accesses using deref will return instantly, and not reevaluate that code. A corollary to this is that multiple threads can safely attempt to dereference a delay for the first time; all of them will block until the delay’s code is evaluated (only once!), and a value is available.

Promises : Promises are distinct from delays and futures insofar as they are not created with any code or function that will eventually define its value. promise is initially a barren container; at some later point in time, the promise may be fulfilled by having a value delivered to it. Thus, a promise is similar to a one-time, single-value pipe: data is inserted at one end via deliver and retrieved at the other end by deref.

Atoms, Refs, Agents, CSP

Atoms : Atoms are the most pervasive concurrency primitive I have seen in my short time as a Clojure developer at Helpshift. They are great at representing a single piece of information or shared state. Atoms ensure that you can only write consistent values to this shared state. An Atom holds a current value, which has to be valid (optionally enforced by a validator function). You then pass swap! a pure function that calculates the next value from the current one. The Atom guarantees that anybody derefing it always gets either the old value or the new value, and never anything in between. The pure-function part is important because if the underlying compare-and-swap operation fails, the atom retries the transformation until it succeeds, so your function may be called more than once.

Agents : Like Atoms, Agents are uncoordinated: you can't modify two Agents with any kind of joint guarantee. The difference is all about which thread does the work. swap! runs in the current thread of execution, whereas send runs the computation on a separate thread, and the calling function can continue immediately. send processes actions on a fixed-size thread pool. For actions that may block, such as I/O, Clojure gives you send-off, which uses a separate, growable thread pool so that blocking actions don't starve the pool used by send.

Refs : Refs implement software transactional memory (STM). Essentially, inside a dosync transaction you make a set of coordinated changes involving multiple shared refs, and these changes are isolated from everyone outside the transaction. Once the changes are calculated, the transaction either commits all of them or fails and starts again. All the while, the rest of the code is oblivious to these updates until the commit succeeds. Refs are an essential ingredient in the Ants simulation problem.

CSP : CSP (Communicating Sequential Processes) describes systems in terms of component processes that operate independently and interact with each other solely through message passing. core.async is an excellent Clojure library that provides CSP-style concurrency semantics: you pass data around via channels, use extremely lightweight thread-like entities via go blocks, and can block on whichever of a set of channel operations completes first using alts!
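To give a feel for channel-based message passing in Swift, here is a minimal blocking, bounded channel built on NSCondition. Channel is a hypothetical type: it is roughly analogous to a core.async (chan 2) used from ordinary threads, and it has nothing like go blocks or alts!

```swift
import Foundation

// Hypothetical bounded channel: put blocks when full, take blocks when empty.
final class Channel<T> {
    private var buffer: [T] = []
    private let capacity: Int
    private let condition = NSCondition()

    init(capacity: Int) {
        precondition(capacity > 0, "capacity must be positive")
        self.capacity = capacity
    }

    func put(_ value: T) {
        condition.lock(); defer { condition.unlock() }
        while buffer.count >= capacity { condition.wait() }
        buffer.append(value)
        condition.broadcast()   // wake any waiting takers
    }

    func take() -> T {
        condition.lock(); defer { condition.unlock() }
        while buffer.isEmpty { condition.wait() }
        let value = buffer.removeFirst()
        condition.broadcast()   // wake any waiting putters
        return value
    }
}

// A producer and a consumer that only communicate through the channel.
let channel = Channel<Int>(capacity: 2)
Thread {
    for i in 1...5 { channel.put(i) }
}.start()

var received: [Int] = []
for _ in 1...5 { received.append(channel.take()) }
print(received)   // [1, 2, 3, 4, 5]
```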

What Swift devs can learn from Clojure

Immutability

- Prefer immutable data. Use lets instead of mutable vars. Wherever possible, use let declarations both in functions and in objects.

- let declarations in functions will help you avoid accidentally mutating a value.

- let declarations also help the Swift compiler optimise code since it knows certain data will not change

- let declarations in Swift structs are also critical if you want to protect against piecemeal state mutation. For example, as you can see in the second snippet below, even though that StubbornPerson is a struct and therefore a value type, its members are vars, so we can do things like directly changing the age.

```swift
// All members are let: the only way to "change" a person is to build a new one.
public struct StubbornPerson {
    let name: String
    let age: Int

    public static func incrementAge(of person: StubbornPerson, by: Int) -> StubbornPerson {
        return StubbornPerson(name: person.name, age: person.age + by)
    }
}
```

```swift
// Members are var: any var instance can be mutated piecemeal.
public struct StubbornPerson {
    var name: String
    var age: Int

    public mutating func changeName(name: String) {
        self.name = name
    }
}

var aStubbornPerson = StubbornPerson(name: "Aruna", age: 32)
aStubbornPerson.changeName(name: "New name")
aStubbornPerson.age += 1
```

A better pattern is to define all members as let and write functions that return new data by performing some update operation. Think of this as similar to assoc for maps. Swift doesn't have memory-optimised persistent data structures like Clojure's, but its standard collections and strings are copy-on-write, so unless your workload is extremely heavy, these patterns will generally give you a lot more benefit than they cost in the long run.

```swift
public func lookAtImmutability() -> StubbornPerson {
    let aStubbornPerson = StubbornPerson(name: "Sarah", age: 32)
    let anAgingPerson = StubbornPerson.incrementAge(of: aStubbornPerson, by: 1)
    return anAgingPerson
}

func testMapAssoc() {
    // Typed as [String: Any] so that a Date can be added alongside the Strings
    let aMap: [String: Any] = ["name": "some-name",
                               "designation": "programmer",
                               "company": "helpshift"]
    let mapWithJoiningDate = assoc(aMap, key: "joining", value: Date())
    print("Map with joining date is \(mapWithJoiningDate)")
}
```
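The assoc used above isn't defined in this post, so here is one possible version, assuming it simply returns an updated copy of a dictionary. It is a hypothetical helper, not a standard library function.

```swift
// Hypothetical helper: returns a new dictionary with `key` set to `value`,
// leaving the original untouched (Dictionary is a value type).
func assoc<K: Hashable, V>(_ map: [K: V], key: K, value: V) -> [K: V] {
    var copy = map      // copy-on-write: storage is only duplicated on the write below
    copy[key] = value
    return copy
}

let original = ["name": "some-name"]
let updated = assoc(original, key: "company", value: "helpshift")
print(original.count, updated.count)   // 1 2
```

Mixing value types, as testMapAssoc does with a Date, needs the dictionary to be typed [String: Any] so that V is inferred as Any.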

Value types

References to a class instance share data, which means that any change made through one reference is visible to all the others. A struct, on the other hand, is a value type, and each assignment creates an independent copy. This gives structs the benefit of safe mutation: you can trust that no other part of your app is changing your copy of the data at the same time. This makes it easier to reason about your code and is especially helpful in multi-threaded environments, where a different thread could otherwise alter your data underneath you.

Expressive concurrency primitives

Futures

We can also do better by building some of the abstractions we love from Clojure. Swift's support for functional programming and its decent Collections library make this a very viable option for writing better concurrent code. The test below shows a simple Future in use: it performs a long-running operation on another thread and then hands back the result for the calling code to consume.

```swift
func testFutures() {
    let future = Future<Int> { () -> Int in
        print("Starting hard calculation")
        Thread.sleep(forTimeInterval: 2)
        print("Long hard calculation done!")
        return 42
    }
    print("Let's wait for the future to be realised!")
    XCTAssert(future.deref() == 42)
}
```
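The Future type itself isn't shown here, so this is one possible minimal sketch built on a DispatchGroup, assuming the API used in the test (a trailing-closure initializer and deref()). It is not the author's implementation, and it leaves out cancellation and timeouts.

```swift
import Foundation

// Sketch: the body starts on a background queue immediately,
// and deref() blocks until the result is ready.
final class Future<T> {
    private let group = DispatchGroup()
    private var result: T?

    init(queue: DispatchQueue = .global(), _ body: @escaping () -> T) {
        group.enter()
        queue.async {
            self.result = body()
            self.group.leave()   // leave() after the write, so wait() sees the result
        }
    }

    func deref() -> T {
        group.wait()             // returns immediately once the body has finished
        return result!
    }
}

let answer = Future<Int> {
    Thread.sleep(forTimeInterval: 0.1)
    return 42
}
print("Doing other work while the future runs")
print(answer.deref())   // 42
```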

Promises

Similarly, we can build Promises, where one thread calculates the value and another thread waits for it to become available. Interestingly, Apple's XCTest framework has had a similar pattern for quite a while now with XCTestExpectation and waitForExpectations(timeout:handler:), but it has never made it into the standard app development frameworks.

```swift
func testPromises() {
    let promise = Promise<Int>()
    DispatchQueue.global().async {
        Thread.sleep(forTimeInterval: 2)
        promise.deliver(value: 42)
    }
    print("Let's wait for the promise to be delivered!")
    XCTAssert(promise.deref() == 42)

    // Promises cannot be delivered more than once
    promise.deliver(value: 10)
    promise.deliver(value: 16)
    XCTAssert(promise.deref() == 42)
}
```
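Likewise, a minimal Promise could be sketched with NSCondition, assuming the deliver(value:) and deref() API from the test. This is an illustrative sketch, not the author's implementation; returning a Bool from deliver is an extra assumption of this sketch so callers can tell whether their value won.

```swift
import Foundation

// Sketch: the first deliver wins, later deliveries are ignored,
// and deref() blocks until a value has been delivered.
final class Promise<T> {
    private let condition = NSCondition()
    private var value: T?

    @discardableResult
    func deliver(value newValue: T) -> Bool {
        condition.lock(); defer { condition.unlock() }
        guard value == nil else { return false }   // already delivered: ignore
        value = newValue
        condition.broadcast()                       // wake every waiting deref()
        return true
    }

    func deref() -> T {
        condition.lock(); defer { condition.unlock() }
        while value == nil { condition.wait() }    // loop guards against spurious wakeups
        return value!
    }
}

let promise = Promise<Int>()
DispatchQueue.global().async {
    Thread.sleep(forTimeInterval: 0.1)
    promise.deliver(value: 42)
}
print(promise.deref())              // 42
print(promise.deliver(value: 10))   // false
```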

Delays

Delays have an interesting use case too: an expensive DB fetch can be wrapped in a delay, and if some runtime condition means the value is never needed, it is never fetched. If it is needed, the fetch happens only once.

```swift
func testDelay() {
    var calc = Delay<String> {
        Thread.sleep(forTimeInterval: 2)
        print("Inside the delay fn : 1")
        return "DelayedCalculation 1"
    }
    print("Delay not yet realised")
    let answerNeeded = Int.random(in: 0..<10)
    if answerNeeded > 5 {
        print("First value is \(calc.deref())")
        print("Not executed again \(calc.deref())")
    } else {
        print("Don't need that heavy calculation!")
    }
}
```
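One way to sketch Delay, assuming the initializer-plus-deref() API above: keep either the pending closure or the cached value, and evaluate under a lock so that concurrent first callers all block until the single evaluation finishes, as Clojure's delay does. This is not the author's implementation.

```swift
import Foundation

// Sketch: the body runs on the first deref(), exactly once,
// even if several threads call deref() at the same time.
final class Delay<T> {
    private enum State {
        case pending(() -> T)
        case realized(T)
    }

    private let lock = NSLock()
    private var state: State

    init(_ body: @escaping () -> T) {
        state = .pending(body)
    }

    func deref() -> T {
        lock.lock(); defer { lock.unlock() }   // other callers block until the value exists
        switch state {
        case .realized(let value):
            return value
        case .pending(let body):
            let value = body()
            state = .realized(value)           // drops the closure and its captures
            return value
        }
    }
}

var evaluations = 0
let expensive = Delay<String> {
    evaluations += 1
    return "DelayedCalculation 1"
}
print(evaluations)          // 0: nothing has run yet
print(expensive.deref())    // DelayedCalculation 1
print(expensive.deref())    // cached, the body does not run again
print(evaluations)          // 1
```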

Agents

Agents, on the other hand, are a very natural fit for mobile programming. Essentially, the entire GCD framework encourages sending async actions to some entity in FIFO order. The only, and important, difference in the Agent abstraction is that it is tied to a piece of mutable state, and every function dispatched to it updates that state from an old value to a new one.

```swift
func testAgents() {
    let agentCount = 10
    var agents = [Agent<Int>]()
    for _ in 0 ..< agentCount {
        agents.append(Agent(withValue: 0))
    }

    for n in 0 ..< 1_000_000 {
        let i = n % agentCount
        agents[i].send { old in
            old + n
        }
    }

    for i in 0 ..< agentCount {
        agents[i].await()
    }

    let sum = agents.reduce(0) { x, y in
        x + y.deref()
    }
    XCTAssert(sum == 499_999_500_000)
}
```
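An Agent maps naturally onto a private serial DispatchQueue. Below is a minimal sketch assuming the init(withValue:), send, await() and deref() API used in the test; it is not the author's implementation and ignores Clojure's error handling and validators. Because await is a keyword in Swift 5.5 and later, the sketch declares and calls it with backticks.

```swift
import Foundation

// Sketch: a value plus a private serial queue. send() enqueues an update
// and returns immediately; the queue applies updates one at a time, in FIFO order.
final class Agent<T> {
    private let queue = DispatchQueue(label: "agent")   // serial by default
    private let lock = NSLock()                          // guards reads from other threads
    private var value: T

    init(withValue initial: T) {
        value = initial
    }

    func send(_ update: @escaping (T) -> T) {
        queue.async {
            // Only this serial queue ever writes, so no update is lost.
            let new = update(self.deref())
            self.lock.lock(); self.value = new; self.lock.unlock()
        }
    }

    func deref() -> T {
        lock.lock(); defer { lock.unlock() }
        return value
    }

    // Blocks until every update sent so far has been applied (FIFO queue + empty sync block).
    func `await`() {
        queue.sync {}
    }
}

let agent = Agent(withValue: 0)
for n in 1...100 {
    agent.send { old in old + n }
}
agent.`await`()
print(agent.deref())   // 5050
```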

Atoms

Atoms are interesting in that low-level support for them already exists, via the compare-and-swap operations in C11's stdatomic.h. Imagine the typical scenario of a UI object holding a list of message objects that might be added, removed and updated from different threads. If that list could be wrapped inside an Atom, the concurrent code would be much simpler and easier to reason about.

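```swift
func testAtoms() {
    let counter = Atom<Int>(withValue: 0)
    let group = DispatchGroup()

    // XCTest's measure runs its block 10 times by default,
    // so this performs 10 x 20 x 400 = 80,000 increments in total.
    measure {
        for _ in 0 ..< 20 {
            DispatchQueue.global().async(group: group) {
                for _ in 0 ..< 400 {
                    counter.swap { $0 + 1 }
                }
            }
        }
    }

    // Wait for every increment to finish instead of sleeping and hoping
    group.wait()
    XCTAssertEqual(counter.deref(), 80000)
}
```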
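Here is a minimal Atom sketch matching the swap and deref API in the test above. A production version would use a hardware compare-and-swap (for example via Apple's swift-atomics package); to stay dependency-free, this sketch uses a lock-guarded version check as a stand-in CAS while keeping the Clojure-style retry loop: read, compute outside the lock, then commit only if nobody else committed first. It is not the author's implementation.

```swift
import Foundation

final class Atom<T> {
    private let lock = NSLock()
    private var value: T
    private var version: UInt64 = 0

    init(withValue initial: T) {
        value = initial
    }

    func deref() -> T {
        lock.lock(); defer { lock.unlock() }
        return value
    }

    private func snapshot() -> (T, UInt64) {
        lock.lock(); defer { lock.unlock() }
        return (value, version)
    }

    // Stand-in CAS: succeeds only if nobody has written since `expected` was read.
    private func compareAndSet(expected: UInt64, newValue: T) -> Bool {
        lock.lock(); defer { lock.unlock() }
        guard version == expected else { return false }
        value = newValue
        version &+= 1
        return true
    }

    @discardableResult
    func swap(_ f: (T) -> T) -> T {
        while true {
            let (old, seen) = snapshot()
            let new = f(old)   // may run more than once under contention, so f must be pure
            if compareAndSet(expected: seen, newValue: new) { return new }
        }
    }
}

let counter = Atom<Int>(withValue: 0)
DispatchQueue.concurrentPerform(iterations: 8) { _ in
    for _ in 0..<1_000 { counter.swap { $0 + 1 } }
}
print(counter.deref())   // 8000
```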

Refs

This is actually something I am really interested in building in Swift. I have made some initial progress here with a naive implementation of an MVCC STM similar to Clojure’s implementation. The aim here is to take this further and see if I can really build out a proper transaction abstraction for more complicated co-ordinated changes.

As you can see in the code here, a Transaction.doSync method takes a block of code and runs it as a transaction. The accounts here are Ref objects with a set method, which changes the value, and a deref method, which returns the in-transaction value inside the block and the current committed value outside it. Clojure's actual implementation is far more complicated, with barging, multiple transaction states and more. There's a wonderful paper, with a GitHub repo to go along with it, that explains how Clojure's implementation works, written in Clojure itself.

```swift
func testSTMTransactions() {
    var accounts: [Ref] = []
    let ref1 = Ref(with: ["name": "Rhi-0",
                          "balance": 100] as [String: AnyHashable])
    accounts.append(ref1)
    ...

    Thread {
        while true {
            Transaction.doSync {
                let account = try accounts[i].deref() as! [String: AnyHashable]
                ...
            }
        }
    }.start()

    Thread {
        Transaction.doSync {
            let aFrom = assoc(accountFrom, key: "balance", value: 0)
            let aTo = assoc(accountTo, key: "balance",
                            value: (accountTo["balance"] as! Int) + balance)
            _ = try accounts[rankFrom].set(value: aFrom)
            _ = try accounts[rankTo].set(value: aTo)
        }
    }.start()
}
```

Conclusion

- All right, so what was the end result of these undertakings for me? Honestly, when I started out, I just wanted to solve these problems because they were there and they were a hard enough challenge for me.

- But now that I have attempted them, I think the biggest gain has been what I learned about how two very modern but very different programming languages help the programmer write safer multi-threaded code.

- Concurrency support in iOS is built for async task execution that doesn't require coordination. So it's a natural fit for things like downloading and processing a bunch of images on background threads and then updating the UI thread with the results.

- Secondly, given the tools Swift developers have at their disposal, immutability is more accessible than before, so ensuring that most of your code deals with immutable values is a matter of programmer discipline. Make your objects value types, write functions with minimal side effects, and update global state in very few places, protected behind sane control primitives.

- java.util.concurrent is a set of utility classes that has greatly improved the safety of writing multi-threaded programs on the JVM. It's a battle-tested library with excellent support for executors, thread pools and concurrent collections such as ConcurrentHashMap and BlockingQueue. If the Swift standard library wants to improve the language's support for concurrency, this would be a good starting point. There are some good open-source attempts at doing this piecemeal, but I think first-class language support is crucial.

- Immutability is all fine at the level of fine-grained pure functions, but to solve real problems, our code has to interact with, and mutate, some state. For this, Clojure's notion of references that can be changed to point to a different immutable value, with strict rules around how they change, is a really solid building block. I think it is Clojure's secret sauce.

In conclusion, I am glad I did this, because understanding how another language solves a problem well teaches us immensely about improving our own language of choice too!